Fuego Jamboree 3

Introduction

The Fuego Jamboree is a technical meeting of the Fuego Test System development community. The purpose of these meetings is to share ideas and plan new features and improvements for the Fuego Test System. We are having our third Fuego Jamboree in July 2019! Note that there is no fee to attend this event, and it is open to the public.

Pictures from the Event

The fun picture:

The serious picture: (Not that good of Tim :( )

Event Details

Fuego Jamboree #3 was held:

- Date: Saturday, July 20, 2019

- Time: 9:00 am to 4:00 pm

- Location: Tokyo, Japan

- Venue: Toyosu campus of Shibaura Institute of Technology.

- Address: 3-7-5 Toyosu, Koto-ku, Tokyo 135-8548

- Room: 5F 511 schoolroom building

- See the map

Please join the Fuego mailing list to get the latest announcements.

Previous events

Information about the previous Fuego Jamboree is here: Fuego_Jamboree_2

You can also see Presentations from previous meetings and other events.

Topics

This list is tentative...

- Status and roadmap

- Features in latest release

- What's being worked on

- Demos

- Hardware testing

- Performance

- New tests

- Status of community

- Customers (AGL, LTSI, LTS, CIP)

- Outreach (marketing)

- Adoption of test standards

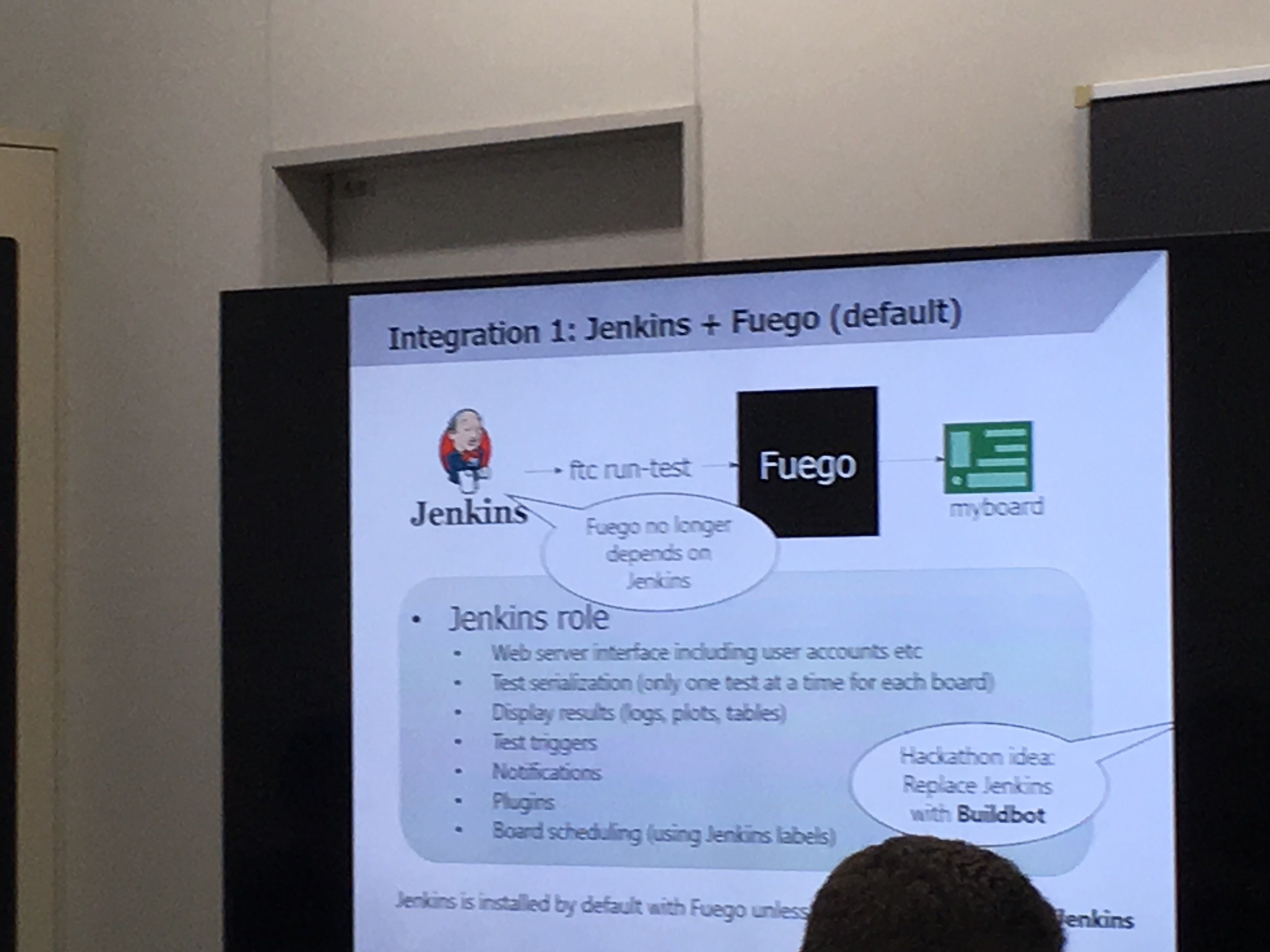

- Integration with other test systems

- LAVA, LKFT, labgrid, ttc, pdudaemon, etc.

- Reports of usage

- Problems encountered, and how they were overcome

Schedule

| Time | Presenter | Topic | Slides |

| 9:00-9:20 | Tim | Welcome and Introduction | Media:FJ3-Fuego-Jamboree-welcome.pdf |

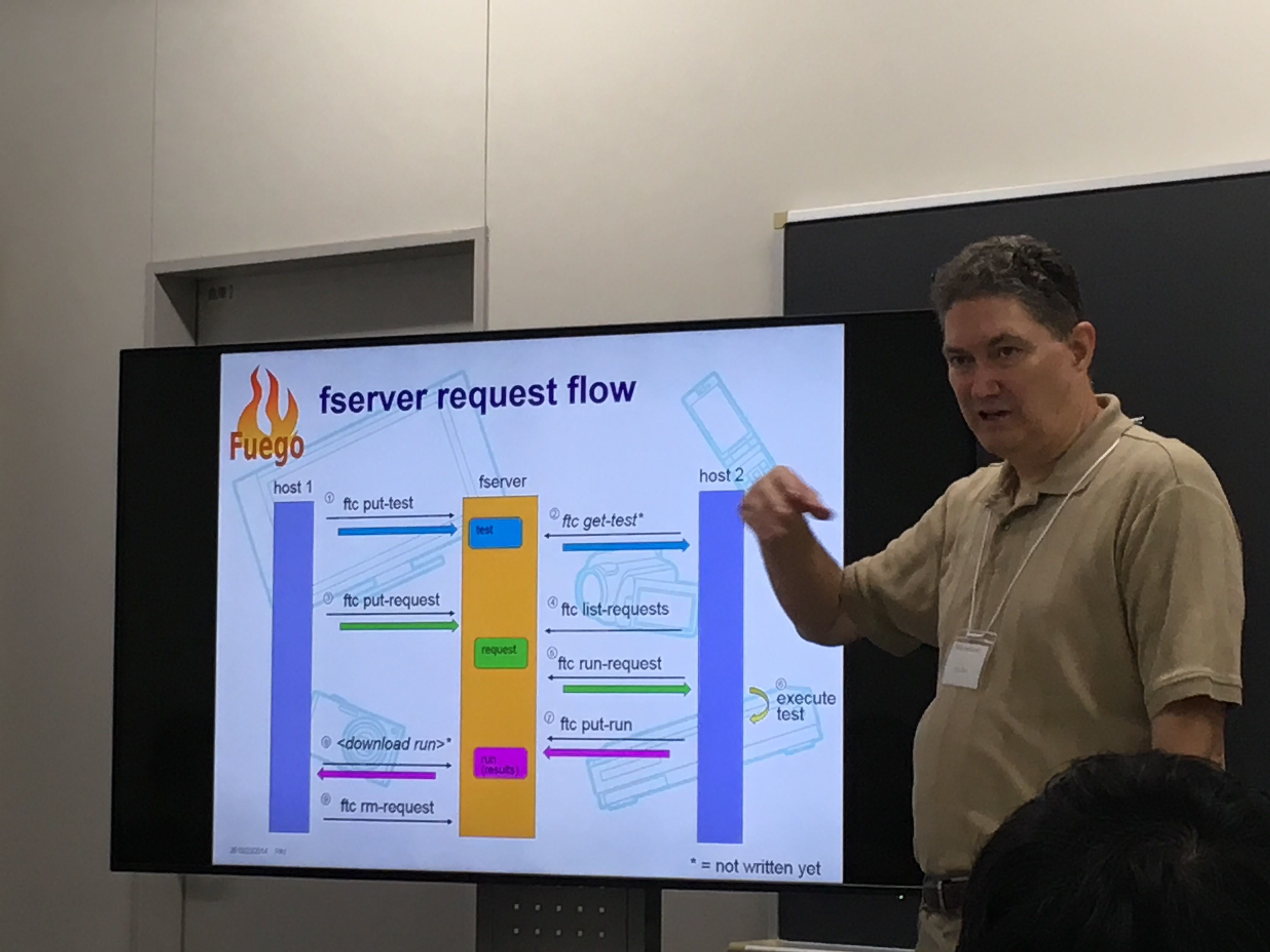

| 9:20-10:00 | Tim | 1.5 Feature review | Media:FJ3-Fuego-1.5-Features-Status-2019-07.pdf |

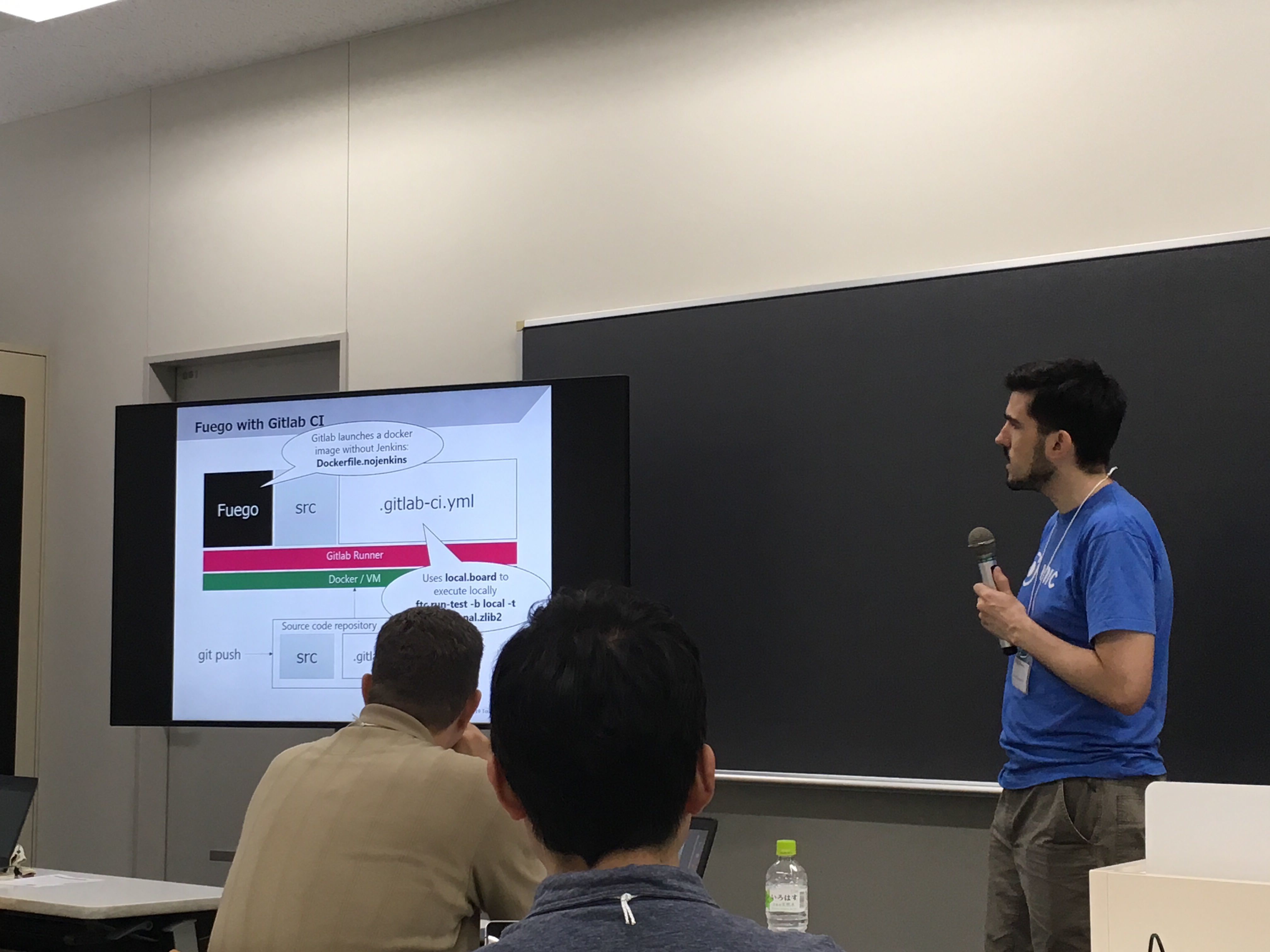

| 10:00-10:50 | Daniel | Fuego interaction with other systems | Media:20190720_fuego-jamboree-no3_daniel-sangorrin.pdf |

| 10:50-11:00 | | BREAK | |

| 11:00-11:30 | Tim | Fuego Issues discussion | Media:FJ3-Fuego-Issues-Discussion-2019-07.pdf |

| 11:30-12:00 | Daniel | Fuego simple provisioning | Media:20190720_fuego-jamboree-no3_ideas_daniel-sangorrin.pdf |

| 12:00-13:00 | | LUNCH | |

| 13:00-13:40 | Motai-san | Capturing timing data during LTP tests | Media:OSSJ19_Automated run-time regression testing with Fuego_HirotakaMotai-190718g.pdf |

| 13:40-15:20 | . | Feature/bug hacking (topic TBD) | no slides |

| 15:20-15:30 | | BREAK | |

| 15:30-16:00 | Tim | Roadmap, Priorities and Wrap-up | Media:FJ3-Fuego-roadmap-priorities-2019-07.pdf |

Session descriptions

1.5 Feature Review

This session will cover features in the 1.5 release, describing their purpose and completion level, and details about how they work.

Presenter: Tim

Fuego interactions with other systems (Daniel)

Daniel will present his work on using Fuego in combination with LAVA, Linaro tests (LKFT), ktest, Squad and Gitlab CI.

Issues Discussion (Tim)

In this session, we will discuss (in a Birds-of-a-Feather format) problem areas that Fuego has, and how we might address them.

Fuego simple provisioning proposal

Discussion leader: Daniel

Daniel will discuss his proposal to add a simple provisioning system to Fuego.

Capturing timing data for LTP tests

Hirotaka Motai will discuss his system for checking for system call duration regressions, using LTP_one_test and strace.

Notes

Functional.LTP_one_test can be converted to measure syscall duration by running it under strace in a wrapper script. To see the timing data in the results, you could make the test a Benchmark and use a parser.py to extract the duration data from the strace log.

Issues:

- this won't work for syscalls like read() that are used too frequently outside the actual test code

- easier to time the duration of the entire test case, than individual syscalls

- these test results will vary per architecture, or gcc version

- this will break the parsing (the parsing will be very brittle)

- could replace 'runtime-logger.sh' in the report command with a macro, which could in turn be replaced with 'strace', 'time', etc.

- maybe put something in the target-side library to start and stop a timer, and emit it into the testlog so Fuego can measure timing on all tests

- One of the LTP testrunners already measures the duration of each test program (don't remember which one)
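As a rough illustration of the parsing step discussed above, the sketch below totals per-syscall durations from a log, assuming the wrapper ran strace with its `-T` option (which appends the time spent in each call as `<seconds>` at the end of the line). The function name `syscall_durations` and the sample log are hypothetical; this is not Fuego's parser.py interface, only the kind of extraction such a parser might perform.

```python
import re
from collections import defaultdict

# Matches lines such as:
#   openat(AT_FDCWD, "/tmp/x", O_RDONLY) = 3 <0.000021>
# An optional leading pid (as written by "strace -f") is tolerated.
SYSCALL_LINE = re.compile(
    r"^(?:\[pid\s+\d+\]\s+|\d+\s+)?(?P<name>\w+)\(.*\)\s+=\s+.*<(?P<secs>\d+\.\d+)>\s*$"
)


def syscall_durations(log_text):
    """Return {syscall_name: (call_count, total_seconds)} for a strace -T log."""
    totals = defaultdict(lambda: [0, 0.0])
    for line in log_text.splitlines():
        match = SYSCALL_LINE.match(line)
        if not match:
            continue  # skip signal, exit, and unfinished/resumed lines
        entry = totals[match.group("name")]
        entry[0] += 1
        entry[1] += float(match.group("secs"))
    return {name: (count, total) for name, (count, total) in totals.items()}


# Hypothetical sample log, for illustration only.
SAMPLE_LOG = """\
openat(AT_FDCWD, "/tmp/x", O_RDONLY) = 3 <0.000021>
read(3, "abc", 3) = 3 <0.000010>
read(3, "", 3) = 0 <0.000005>
+++ exited with 0 +++
"""

if __name__ == "__main__":
    for name, (count, total) in sorted(syscall_durations(SAMPLE_LOG).items()):
        print(f"{name}: {count} calls, {total:.6f} s")
```

Because every matching line is counted, frequently used calls such as read() are summed across the whole process, which is exactly the limitation noted in the first issue above.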

Hacking/bug fix session (group)

In this session, we'll try to actually add a feature or fix a bug in Fuego, using our time together to help develop, test and commit the new code.

Roadmap, Priorities and wrap-up (Tim)

In this session, we'll summarize the set of things people are most likely to work on next, and who's working on them.

Test concurrency:

- there's a problem with the monitor and stressor output.

- when running a normal test in the background, nothing prevents the monitor or stress output from getting intermixed with the test output (log)

- maybe add a flag to make a monitor, stressor, or test quiet

- you need to be able to start and stop the stressors (that's already covered)

Notes for discussion of Roadmap and Priorities

Place notes here during or after the meeting

Priorities

Place notes here during or after the meeting

provisioning discussion notes

Daniel suggested adding primitives to ftc for:

- expect - to drive serial port request/response interactions

- sync - to copy files to the target or tftp area

- exec - to execute a command on the target board

- provision - to ???

(These are like ttc commands: cp, cmd)

"Interactive" is the name LAVA uses for a declarative expect-like feature to drive firmware control over serial port with yaml file definition.
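To make the expect-style idea concrete, here is a minimal sketch of a request/response dialog over a serial-like port. It is not ftc or LAVA code: `expect`, `run_dialog`, and `FakePort` are hypothetical names, and the only assumption about the port is that it offers `read(size)` and `write(data)` methods operating on bytes (as pyserial's `Serial` objects do). `FakePort` stands in for real hardware so the example can run anywhere.

```python
import re


def expect(port, pattern, max_chars=4096):
    """Read one byte at a time until `pattern` appears; return the text seen."""
    regex = re.compile(pattern)
    seen = ""
    while len(seen) < max_chars:
        chunk = port.read(1)
        if not chunk:
            # An empty read means the port timed out or has no more data.
            raise TimeoutError(f"pattern {pattern!r} not seen; got {seen!r}")
        seen += chunk.decode("ascii", errors="replace")
        if regex.search(seen):
            return seen
    raise RuntimeError(f"gave up after {max_chars} characters")


def run_dialog(port, steps):
    """Drive a list of (expected_prompt, reply) steps; return what was read."""
    transcript = []
    for prompt, reply in steps:
        transcript.append(expect(port, prompt))
        port.write(reply.encode("ascii") + b"\n")
    return transcript


class FakePort:
    """Hypothetical stand-in for a serial port, used only for this demo."""

    def __init__(self, incoming):
        self.incoming = bytearray(incoming)
        self.written = []

    def read(self, size):
        data = bytes(self.incoming[:size])
        del self.incoming[:size]
        return data

    def write(self, data):
        self.written.append(data)


if __name__ == "__main__":
    port = FakePort(b"U-Boot 2019\n=> done\n=> ")
    run_dialog(port, [("=> ", "setenv x 1"), ("=> ", "boot")])
    print(port.written)
```

A declarative variant would load the `steps` list from a YAML file rather than building it in code, which is roughly the shape the notes attribute to LAVA's "Interactive" feature.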

Tim raised the idea of using Fuego tests as the sub-steps for provisioning.

example:

- Functional.build_kernel

- Functional.put_kernel_on_board

- Functional.reboot

- Functional.batch_provision - that calls the above in sequence

Daniel noted that this mixes testing and provisioning, which is confusing. Also, there are lots of phases in a Fuego test that are inappropriate if you just want to perform the provisioning. We should only use Fuego tests for things that are actually tests, not steps in another Fuego sub-process. (i.e., provisioning should have its own system, like labgrid, LAVA, or something else)

Motai-san is doing the image building phase in Jenkins, outside of Fuego

- is provisioning manually

Image preparation/provisioning consists of:

- build step - build the image (could be jenkins)

- deploy - put the image to an artifact server (could be jenkins)

- provision - put image from artifact server to board

- test
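The four stages listed above can be sketched as an ordered pipeline that stops at the first failing stage, so a board is never tested against an image that failed to build, deploy, or provision. This is only an illustration: `run_pipeline` and the stage callables are hypothetical placeholders, not part of Fuego, Jenkins, or any provisioning tool.

```python
def run_pipeline(stages):
    """Run (name, callable) stages in order; stop at the first failure.

    Each callable returns a truthy value on success. The return value is
    the list of (name, succeeded) pairs for the stages that actually ran.
    """
    results = []
    for name, stage in stages:
        ok = bool(stage())
        results.append((name, ok))
        if not ok:
            break  # later stages depend on this one, so do not run them
    return results


if __name__ == "__main__":
    # Placeholder stages; real ones would call out to the build system,
    # the artifact server, the board, and the test runner respectively.
    stages = [
        ("build", lambda: True),
        ("deploy", lambda: True),
        ("provision", lambda: True),
        ("test", lambda: True),
    ]
    for name, ok in run_pipeline(stages):
        print(f"{name}: {'ok' if ok else 'FAILED'}")
```

Keeping each stage as a separate callable leaves room for the split described in the notes, where the build and deploy stages run in Jenkins and only the final stage is a Fuego test.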

Wang asked about executing a command on target, receiving information back, and acting on the information received.

Attendees

Please add your name to this list if you plan to attend the meeting.

Note - the 'Attended meeting' field will be filled out at the meeting, please leave that field blank

| Name | Company | Email | Attended meeting |

| Tim Bird | Sony | tim.bird (at) sony.com | yes |

| Daniel Sangorrin | Toshiba | daniel.sangorrin (at) toshiba.co.jp | yes |

| Shinsuke Kato | Panasonic | kato.shinsuke (at) jp.panasonic.com | yes |

| Teppei Asaba | Fujitsu | teppeiasaba (at) fujitsu.com | yes |

| Wang Mingyu | Fujitsu | wangmy (at) cn.fujitsu.com | yes |

| Lei Maohui | Fujitsu | leimh (at) cn.fujitsu.com | yes |

| Keiya Nobuta | Fujitsu | nobuta.keiya (at) fujitsu.com | yes |

| Hirotaka MOTAI | Mitsubishi Electric | motai.hirotaka (at) aj.mitsubishielectric.co.jp | yes |

| Pintu Kumar | Sony | pintu.kumar (at) sony.com | yes |

| Norio Kobota | Sony | norio.kobota (at) sony.com | yes |

| Nobuyuki Tanaka | Sony | No.Tanaka (at) sony.com | yes |

| Takuo Koguchi | Hitachi | takuo.koguchi.sw (at) hitachi.com | yes |

| Harunobu Kurokawa | Renesas | harunobu.kurokawa.dn (at) renesas.com | yes |

Photo